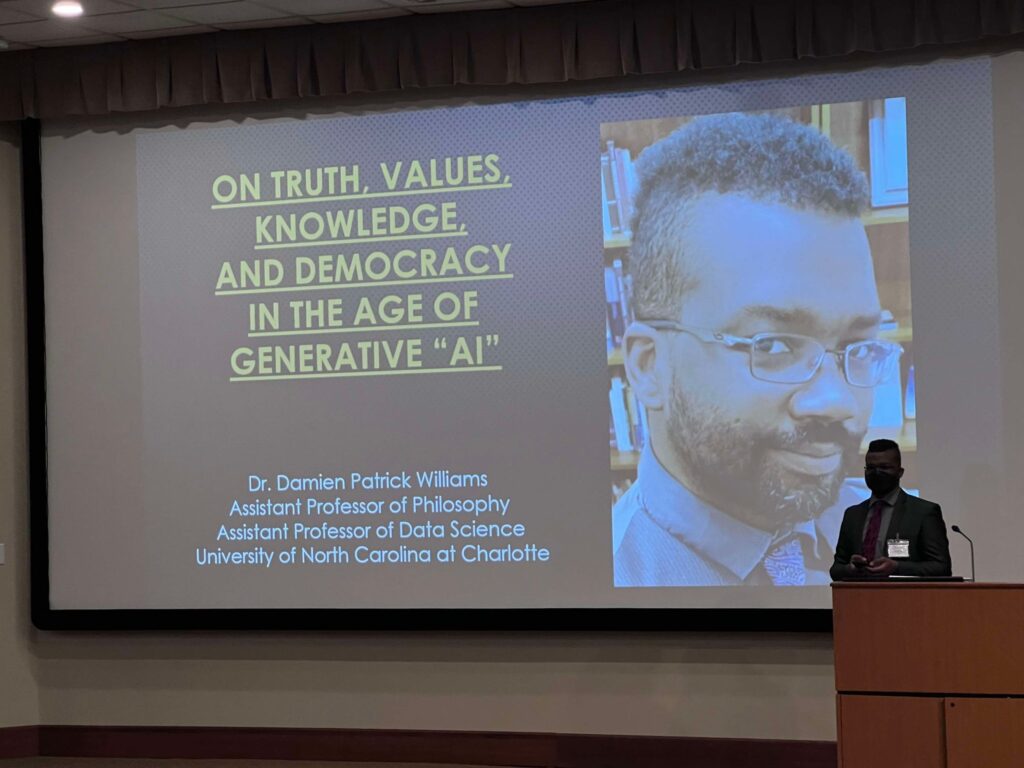

Back in October, I was the keynote speaker for the Society for Ethics Across the Curriculum’s 25th annual conference. My talk was titled “On Truth, Values, Knowledge, and Democracy in the Age of Generative ‘AI,’” and it touched on a lot of things that I’ve been talking and writing about for a while (in fact, maybe the title is familiar?), but especially in the past couple of years. I covered deepfakes, misinformation, disinformation, the social construction of knowledge, artifacts, and consensus reality, and more. And I know it’s been a while since the talk, but it’s not like these things have gotten any less pertinent these past months.

As a heads-up, I didn’t record the Q&A because I didn’t get the audience’s permission ahead of time, and considering how much of this is about consent, that’d be a little weird, yeah? Anyway, it was in the Q&A section where we got deep into the environmental concerns of water and power use, including ways to use those facts to get through to students who possibly don’t care about some of the other elements. There were honestly a lot of really trenchant questions from this group, and I was extremely glad to meet and think with them. Really hoping to do so more in the future, too.

Me at the SEAC conference; photo taken by Jason Robert (see alt text for further detailed description).

Below, you’ll find the audio, the slides, and the lightly edited transcript (so please forgive any typos and grammatical weirdnesses). All things being equal, a goodly portion of the concepts in this should also be getting worked into a longer paper coming out in 2025.

Hope you dig it.

Until Next Time.